Artificial intelligence has fundamentally changed how development teams approach code quality, and Claude code review represents one of the most sophisticated implementations of this transformation. As no-code and AI platforms continue reshaping enterprise software development in 2026, understanding how AI-assisted code review works becomes essential for agencies and development teams striving to maintain high standards while accelerating delivery timelines. This comprehensive guide explores how Claude's code review capabilities integrate into modern development workflows, the technical architecture behind its multi-agent system, and practical strategies for implementation across different project types.

Understanding Claude Code Review Architecture

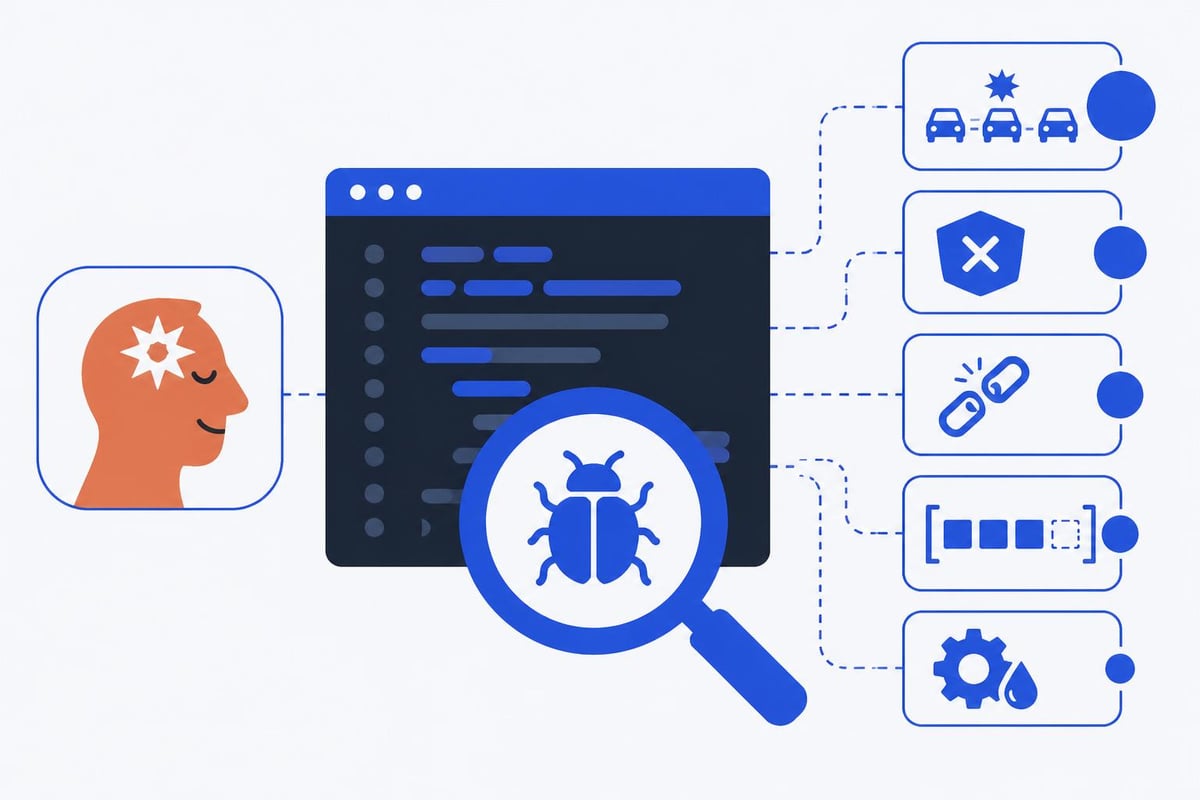

Claude code review operates through a sophisticated multi-agent system that analyzes pull requests using specialized AI agents working in parallel. Unlike traditional static analysis tools that follow rigid rule sets, this approach leverages multiple Claude instances, each focused on different aspects of code quality.

The system employs several specialized agents simultaneously:

- Security Agent - Identifies potential vulnerabilities, injection risks, and authentication issues

- Performance Agent - Detects inefficient algorithms, memory leaks, and scalability concerns

- Logic Agent - Reviews business logic correctness and edge case handling

- Style Agent - Ensures consistency with project conventions and best practices

- Documentation Agent - Verifies code clarity and comment quality

Each agent operates independently, then results are synthesized through a confidence-based filtering system. According to detailed analysis of Claude Code's architecture, this parallel approach reduces false positives by approximately 40% compared to single-model analysis while maintaining high detection rates for genuine issues.
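The fan-out-then-filter pattern described above can be illustrated with a minimal sketch. This is not Claude's actual implementation. The `Finding` fields, the two stub agents (which pattern-match instead of calling a model), and the 0.7 confidence threshold are all hypothetical, chosen only to show parallel agents whose results are merged and filtered by confidence.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    agent: str
    path: str
    line: int
    message: str
    confidence: float  # hypothetical 0.0-1.0 score reported by the agent


Agent = Callable[[str], list[Finding]]


def security_agent(diff: str) -> list[Finding]:
    # Stub: a real agent would call a model; this one just pattern-matches.
    if "execute(" in diff and "%" in diff:
        return [Finding("security", "app/db.py", 12,
                        "Possible SQL injection via string formatting", 0.9)]
    return []


def style_agent(diff: str) -> list[Finding]:
    # Stub that always reports a low-confidence style nit.
    return [Finding("style", "app/db.py", 3,
                    "Variable name does not follow snake_case", 0.4)]


def review(diff: str, agents: list[Agent], threshold: float = 0.7) -> list[Finding]:
    # Run every agent independently and in parallel.
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        results = list(pool.map(lambda agent: agent(diff), agents))

    merged: dict[tuple[str, int, str], Finding] = {}
    for findings in results:
        for f in findings:
            # Confidence-based filter: drop findings below the threshold.
            if f.confidence < threshold:
                continue
            # When agents report the same issue (same path, line, message),
            # keep only the highest-confidence copy.
            key = (f.path, f.line, f.message)
            if key not in merged or f.confidence > merged[key].confidence:
                merged[key] = f
    # Most confident findings first.
    return sorted(merged.values(), key=lambda f: -f.confidence)


diff = 'cursor.execute("SELECT * FROM users WHERE id = %s" % user_id)'
for finding in review(diff, [security_agent, style_agent]):
    print(f"[{finding.agent}] {finding.path}:{finding.line} {finding.message}")
```

With the default threshold only the security finding survives; the 0.4-confidence style nit is filtered out, which is the false-positive reduction the synthesis step is meant to provide.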

Integration with GitHub Workflows

The Code Review feature in Claude Code integrates directly into existing GitHub pull request workflows without requiring significant infrastructure changes. Teams can configure review triggers to activate automatically when developers open pull requests, or manually invoke reviews for specific changes requiring deeper analysis.

This seamless integration means development teams maintain their existing processes while gaining AI-powered analysis. The system posts review comments directly on pull requests, highlighting specific line numbers and providing actionable feedback that developers can address immediately.
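Line-level review comments like these can be posted by any integration through GitHub's REST endpoint for creating a pull request review (`POST /repos/{owner}/{repo}/pulls/{pull_number}/reviews`). The sketch below only builds the JSON request body; it is an illustration, not how Claude Code posts comments internally. The input finding shape (`path`, `line`, `severity`, `message`) and the rule that any "critical" finding requests changes are assumptions.

```python
import json


def build_review_payload(findings: list[dict], summary: str) -> dict:
    """Build a request body for GitHub's "create a review" endpoint."""
    comments = [
        {
            "path": f["path"],  # file path relative to the repository root
            "line": f["line"],  # line in the file on the chosen side of the diff
            "side": "RIGHT",    # comment on the new (changed) version of the file
            "body": f"**{f['severity']}**: {f['message']}",
        }
        for f in findings
    ]
    # Hypothetical policy: request changes only when something critical was found.
    critical = any(f["severity"] == "critical" for f in findings)
    event = "REQUEST_CHANGES" if critical else "COMMENT"
    return {"body": summary, "event": event, "comments": comments}


findings = [{"path": "app/db.py", "line": 12, "severity": "critical",
             "message": "SQL query built with string formatting"}]
print(json.dumps(build_review_payload(findings, "1 critical issue found"), indent=2))
```

Sending the payload requires an authenticated POST to the endpoint above, which is omitted here.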

Configuration and Customization Options

Setting up Claude code review for your development environment involves several strategic decisions that affect both accuracy and usefulness. The official setup guide provides step-by-step instructions, but understanding the customization options is what turns a working setup into a useful one.

CLAUDE.md and REVIEW.md Files

Projects can include two special configuration files that tailor review behavior:

CLAUDE.md defines project-specific context including:

- Technology stack and framework versions

- Architectural patterns and design principles

- Custom naming conventions

- Domain-specific terminology

REVIEW.md specifies review priorities such as:

- Critical security requirements

- Performance benchmarks

- Specific code patterns to flag or approve

- Team-specific quality standards

| Configuration File | Primary Purpose | Example Use Cases |
|---|---|---|
| CLAUDE.md | Project context and architecture | Framework-specific patterns, business domain rules |
| REVIEW.md | Review focus and priorities | Security requirements, performance thresholds |
| .gitignore | Exclude files from review | Dependencies, generated code, test fixtures |

These configuration files transform generic AI analysis into context-aware reviews that understand your specific project requirements. For agencies like Big House Technologies working across diverse client projects, this customization ensures reviews align with each client's unique standards and industry requirements.
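One way to picture how these files make a review context-aware is to imagine them being prepended to the diff before analysis. The sketch below is purely hypothetical: the `build_review_context` helper, the section headings, and the prompt layout are illustrative assumptions, and Claude Code's own documentation is the authority on how CLAUDE.md and REVIEW.md are actually read.

```python
from pathlib import Path

# Both files are optional; whichever exist are included, in this order.
CONTEXT_FILES = ("CLAUDE.md", "REVIEW.md")


def build_review_context(repo_root: str, diff: str) -> str:
    """Hypothetical helper: combine project context files with a diff."""
    sections = []
    for name in CONTEXT_FILES:
        path = Path(repo_root) / name
        if path.is_file():
            text = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n{text}")
    # The diff always comes last, after the project-specific guidance.
    sections.append(f"## Diff under review\n{diff}")
    return "\n\n".join(sections)
```

The design point is ordering: project context and review priorities precede the code, so whatever analyzes the diff sees the team's conventions before it sees the change.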

Trigger Configuration Strategies

Teams can choose between automatic and manual review triggers based on development workflow needs:

- Automatic triggers activate on every pull request, providing consistent oversight

- Manual triggers let developers request reviews selectively for complex changes

- Conditional triggers fire only when a pull request matches rules based on file paths, changed line counts, or PR labels

- Scheduled reviews batch-analyze multiple changes periodically

The optimal configuration depends on team size, release cadence, and code complexity. Smaller teams often benefit from automatic triggers ensuring nothing escapes review, while larger organizations might prefer selective triggering to manage API costs and review volume.
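A conditional trigger policy of the kind listed above can be sketched as a small decision function that a CI step might evaluate before requesting a review. Everything here is hypothetical: the `PullRequest` shape, the watched path patterns, the 50-line threshold, and the label names are placeholders to tune per team, and Claude Code's own trigger settings may be configured differently.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatch


@dataclass
class PullRequest:
    changed_files: list[str]
    changed_lines: int
    labels: set[str] = field(default_factory=set)


# Hypothetical policy values.
WATCHED_PATHS = ["src/auth/*", "src/payments/*", "*.sql"]
MIN_CHANGED_LINES = 50
FORCE_LABEL = "needs-ai-review"
SKIP_LABEL = "skip-ai-review"


def should_review(pr: PullRequest) -> bool:
    if SKIP_LABEL in pr.labels:
        return False  # an explicit opt-out label wins
    if FORCE_LABEL in pr.labels:
        return True   # manual request expressed as a label
    # Security- or money-critical paths are always reviewed.
    if any(fnmatch(f, pat) for f in pr.changed_files for pat in WATCHED_PATHS):
        return True
    # Otherwise, only larger changes justify the API cost.
    return pr.changed_lines >= MIN_CHANGED_LINES


print(should_review(PullRequest(["src/auth/login.py"], changed_lines=3)))  # True
print(should_review(PullRequest(["README.md"], changed_lines=10)))         # False
```

Ordering the checks so labels come first keeps humans in control, while the path and size rules give larger teams the selective triggering that keeps review volume and cost manageable.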

Practical Benefits for Development Teams

Claude code review delivers measurable improvements across multiple development quality metrics. The comprehensive analysis of capabilities demonstrates rejection rates under 15% for flagged issues, meaning developers accept and address the vast majority of suggestions.

Bug Detection and Prevention

The multi-agent architecture excels at identifying bugs that traditional linters miss:

- Race conditions in concurrent code

- Type coercion issues in dynamically typed languages

- Null pointer risks across complex object hierarchies

- Off-by-one errors in loop conditions and array indexing

- Resource leaks where files or connections aren't properly closed
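As an illustration of the off-by-one class listed above, consider this hypothetical helper. A typical linter accepts the buggy version because nothing about it is syntactically or stylistically wrong, yet it silently drops the final window; catching it requires reasoning about what the loop bound should be.

```python
def moving_sums_buggy(values: list[int], window: int) -> list[int]:
    # Off-by-one: range() stops one step early, so the last window is lost.
    return [sum(values[i:i + window]) for i in range(len(values) - window)]


def moving_sums(values: list[int], window: int) -> list[int]:
    # There are len(values) - window + 1 full windows.
    return [sum(values[i:i + window]) for i in range(len(values) - window + 1)]


print(moving_sums_buggy([1, 2, 3, 4], 2))  # [3, 5]
print(moving_sums([1, 2, 3, 4], 2))        # [3, 5, 7]
```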

Real-world implementation data shows Claude code review catches an average of 3.2 bugs per 1,000 lines of code that would otherwise reach production. For AI product development teams working under tight deadlines, this prevention translates directly into less debugging time and faster iteration cycles.

Security Vulnerability Identification

Security analysis is one of Claude code review's strongest capabilities. The dedicated security agent recognizes:

- SQL injection vulnerabilities through unsanitized inputs

- Cross-site scripting (XSS) opportunities in template rendering

- Authentication bypass possibilities in access control logic

- Sensitive data exposure through logging or error messages

- Cryptographic weaknesses in encryption implementations

These security checks prove particularly valuable for no-code development agencies building enterprise applications where security compliance requirements are non-negotiable. The AI understands context well enough to distinguish between safe and unsafe patterns based on data flow analysis.
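The SQL injection case shows why data flow matters. The sketch below uses Python's built-in `sqlite3` module with an in-memory database (the table and payload are made up for illustration). Both functions look similar at a glance, but only the first lets user input become part of the SQL text; the second binds it as a parameter.

```python
import sqlite3


def setup() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany("INSERT INTO users VALUES (?, ?)",
                     [("alice", "a1"), ("bob", "b2")])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is spliced directly into the SQL string.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list:
    # Safe: the "?" placeholder makes the driver treat input as data, never as SQL.
    return conn.execute("SELECT name, secret FROM users WHERE name = ?",
                        (name,)).fetchall()


conn = setup()
payload = "x' OR '1'='1"
print(len(find_user_unsafe(conn, payload)))  # 2: every row leaks
print(len(find_user_safe(conn, payload)))    # 0: matched as a literal name
```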

Cost Considerations and ROI Analysis

Implementing Claude code review involves API costs that teams must weigh against quality improvements. The detailed operational analysis breaks down typical cost structures and budget planning strategies.

Pricing Structure and Usage Patterns

Claude code review pricing follows a token-based model where costs scale with:

- Number of files analyzed per pull request

- Total lines of code under review

- Complexity of codebase requiring deeper analysis

- Frequency of review triggers
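A rough per-review estimate follows directly from those factors. The sketch below is a back-of-the-envelope model under stated assumptions: roughly 10 tokens per line, every agent reading the diff plus surrounding context, about 800 output tokens per agent, and per-million-token prices passed in by the caller. The prices in the example are placeholders, not Anthropic's published rates; check the current pricing page before budgeting.

```python
def estimate_review_cost(
    changed_lines: int,
    context_lines: int,
    num_agents: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    tokens_per_line: float = 10.0,       # assumption
    output_tokens_per_agent: int = 800,  # assumption
) -> float:
    """Rough cost of one review in dollars under the assumptions above."""
    # Each agent reads the changed lines plus surrounding context.
    input_tokens = (changed_lines + context_lines) * tokens_per_line * num_agents
    output_tokens = output_tokens_per_agent * num_agents
    return (input_tokens * input_price_per_mtok
            + output_tokens * output_price_per_mtok) / 1_000_000


# 200 changed lines, 800 lines of context, 5 agents, placeholder prices.
print(round(estimate_review_cost(200, 800, 5, 3.0, 15.0), 2))  # 0.21
```

Because input tokens scale with both context size and agent count, trimming context (for example, excluding generated files) is usually the biggest lever on cost.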

Average costs per review:

| Repository Size | Files Changed | Estimated Cost | Review Time |
|---|---|---|---|
| Small (< 10K LOC) | 1-5 files | $0.05 - $0.15 | 30-60 seconds |
| Medium (10K-50K LOC) | 5-15 files | $0.15 - $0.40 | 1-2 minutes |
| Large (50K-200K LOC) | 15-30 files | $0.40 - $1.20 | 2-4 minutes |
| Enterprise (200K+ LOC) | 30+ files | $1.20 - $3.00 | 4-8 minutes |

For most development teams, monthly costs range between $50 and $300 depending on commit frequency and repository size. This investment typically returns value through reduced bug fixes, faster code reviews by human reviewers, and improved code quality metrics.
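To turn the per-review ranges in the table above into a monthly budget, multiply by the number of reviews. The sketch assumes 21 working days and 1.5 reviews per pull request (to account for re-reviews after new pushes); both are hypothetical defaults to replace with your own numbers.

```python
# Per-review cost ranges (USD) taken from the table above.
COST_PER_REVIEW = {
    "small": (0.05, 0.15),
    "medium": (0.15, 0.40),
    "large": (0.40, 1.20),
    "enterprise": (1.20, 3.00),
}


def monthly_budget(size: str, prs_per_day: float,
                   working_days: int = 21,          # assumption
                   reviews_per_pr: float = 1.5      # assumption: re-reviews
                   ) -> tuple[float, float]:
    """Return the (low, high) monthly cost estimate in dollars."""
    low, high = COST_PER_REVIEW[size]
    reviews = prs_per_day * working_days * reviews_per_pr
    return round(reviews * low, 2), round(reviews * high, 2)


# A medium repository with 20 pull requests per working day.
print(monthly_budget("medium", 20))
```

Here 20 × 21 × 1.5 = 630 reviews per month, giving roughly $94.50 to $252, inside the $50 to $300 range quoted above.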

Return on Investment Calculation

Quantifying ROI requires measuring both direct cost savings and indirect productivity gains:

Direct savings include:

- Reduced debugging time (average 4-6 hours per prevented production bug)

- Faster human code review cycles (30-40% time reduction)

- Lower security remediation costs (vulnerabilities caught pre-deployment)

Indirect benefits encompass:

- Improved developer learning through consistent feedback

- Standardized code quality across distributed teams

- Reduced technical debt accumulation

Most teams report positive ROI within 2-3 months of consistent Claude code review usage, with the break-even point typically reached after preventing 3-4 production bugs or a single security vulnerability.
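The break-even point can be checked with simple arithmetic: total tool cost divided by the savings from each prevented bug, rounded up. The sketch counts only debugging time (the 4-6 hours per bug cited above) and ignores faster human reviews and security remediation, so it is conservative. The $75 hourly rate in the example is a hypothetical figure.

```python
import math


def breakeven_bugs(monthly_tool_cost: float, months: int,
                   hourly_rate: float, hours_saved_per_bug: float) -> int:
    """Prevented production bugs needed to cover the tool cost over `months`."""
    total_cost = monthly_tool_cost * months
    savings_per_bug = hourly_rate * hours_saved_per_bug
    # Round up: a fraction of a bug does not cover the cost.
    return math.ceil(total_cost / savings_per_bug)


# Upper-end cost ($300/month) over 3 months, hypothetical $75/hour, 4 hours saved per bug.
print(breakeven_bugs(300, 3, 75, 4))  # 3
```

With these inputs the result is 3 bugs, consistent with the 3-4 bug break-even range above; cheaper plans or higher hourly rates lower it further.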

Implementation Best Practices

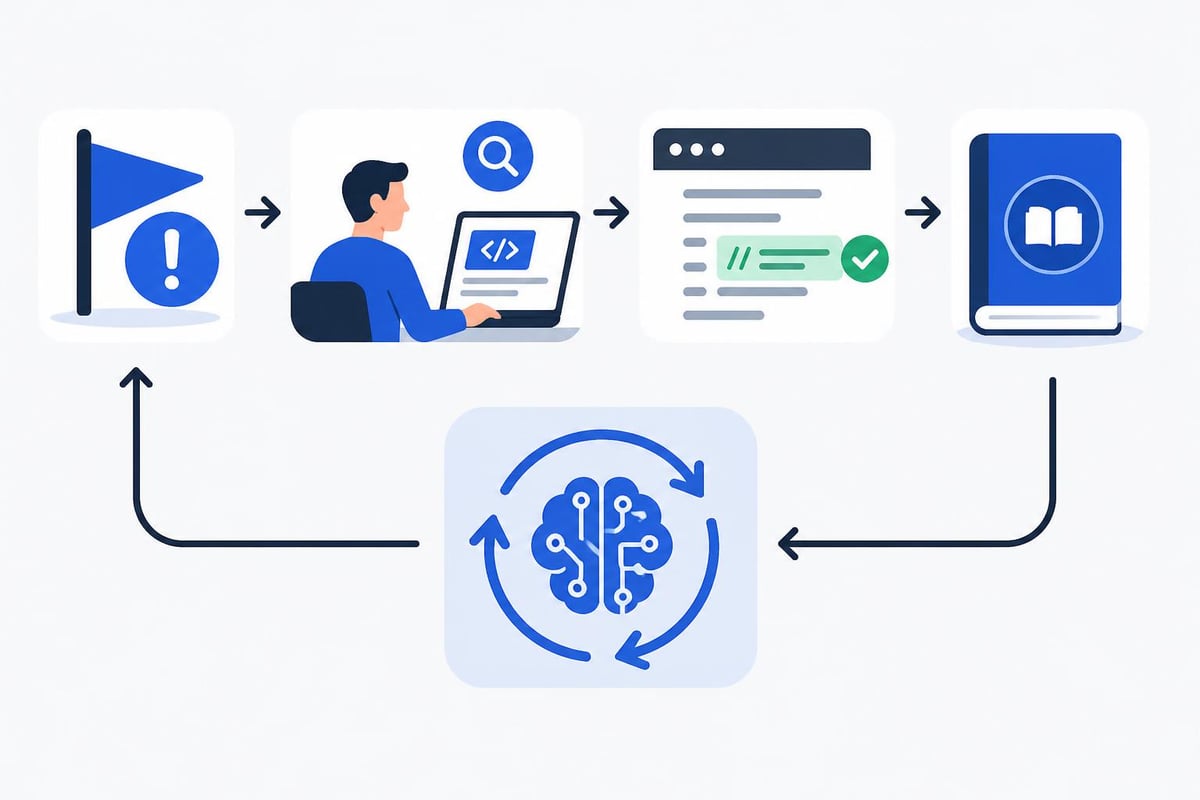

Successfully deploying Claude code review requires thoughtful planning beyond the technical setup. The following strategies maximize effectiveness while minimizing friction in existing workflows.

Team Onboarding Strategies

Introducing AI code review to development teams works best when approached systematically:

- Start with opt-in participation allowing early adopters to validate value

- Focus on specific code areas like security-critical modules or complex algorithms

- Establish feedback loops where developers can flag incorrect suggestions

- Gradually expand scope as team confidence and configuration improve

- Celebrate wins by highlighting bugs caught and improvements made

This incremental approach prevents overwhelming teams while building confidence in AI-generated feedback. The guidance on workflows emphasizes starting with manual triggers before moving to automatic reviews.

Handling False Positives

No AI system achieves perfect accuracy, and Claude code review occasionally flags non-issues. Effective strategies for managing false positives include:

- Suppression comments that tell the system to ignore specific patterns

- REVIEW.md refinements that clarify acceptable practices

- Feedback mechanisms to improve future review accuracy

- Human override processes for disputed suggestions

Teams should track false positive rates monthly, aiming for rates below 20%. Higher rates indicate configuration issues requiring REVIEW.md adjustments or more detailed project context in CLAUDE.md files.
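Tracking this monthly only needs a record of how each flagged issue was resolved. The sketch assumes a hypothetical log where every finding carries a `month` and a `disposition` ("accepted", "false_positive", or "wont_fix"), for example exported from a spreadsheet or from PR comment reactions, and flags any month above the 20% threshold.

```python
from collections import defaultdict


def monthly_false_positive_rates(findings: list[dict]) -> dict[str, float]:
    """Share of findings marked as false positives, per month."""
    totals: dict[str, int] = defaultdict(int)
    false_pos: dict[str, int] = defaultdict(int)
    for f in findings:
        totals[f["month"]] += 1
        if f["disposition"] == "false_positive":
            false_pos[f["month"]] += 1
    return {m: false_pos[m] / totals[m] for m in sorted(totals)}


def months_needing_config_review(rates: dict[str, float],
                                 threshold: float = 0.20) -> list[str]:
    # Months above the threshold suggest REVIEW.md or CLAUDE.md need refinement.
    return [m for m, rate in rates.items() if rate > threshold]
```

For example, a month with 3 false positives out of 10 findings (30%) would be flagged, while one with 1 out of 10 (10%) would not.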

Integration with CI/CD Pipelines

Claude code review works most effectively when integrated into continuous integration workflows:

Pre-merge integration blocks merges until reviews complete and critical issues are addressed. This ensures no unreviewed code reaches main branches.

Post-merge monitoring reviews changes after merging to non-production branches, providing feedback without blocking progress.

Release gate reviews conduct comprehensive analysis before production deployments, catching issues that accumulated across multiple smaller changes.

The optimal integration point depends on team velocity and quality requirements. High-velocity teams often prefer post-merge monitoring to maintain speed, while regulated industries typically require pre-merge gates.
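For a pre-merge gate, the CI job only needs to turn the review outcome into a pass or fail exit code. The sketch assumes a hypothetical JSON report with a `completed` flag and a `findings` list whose entries carry `severity`, `path`, `line`, `message`, and an optional `resolved` flag; Claude code review does not necessarily emit this format, so adapt the parsing to whatever your integration actually produces.

```python
import json
import sys

# Hypothetical policy: these severities block a merge until resolved.
BLOCKING_SEVERITIES = {"critical", "high"}


def gate(report: dict) -> int:
    """Return a process exit code: 0 lets the merge proceed, 1 blocks it."""
    if not report.get("completed", False):
        print("Review has not completed; blocking merge.")
        return 1
    open_blockers = [
        f for f in report.get("findings", [])
        if f.get("severity") in BLOCKING_SEVERITIES and not f.get("resolved", False)
    ]
    for f in open_blockers:
        print(f"{f['severity'].upper()}: {f['path']}:{f['line']} {f['message']}")
    return 1 if open_blockers else 0


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage in CI: python review_gate.py review-report.json
    with open(sys.argv[1], encoding="utf-8") as fh:
        sys.exit(gate(json.load(fh)))
```

The same function supports post-merge monitoring: run it without failing the job and forward the printed findings to the team instead.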

Advanced Customization Techniques

Beyond basic configuration, teams can use advanced customization to align Claude code review precisely with specific project needs and development methodologies.

Domain-Specific Rule Sets

Projects in specialized domains benefit from custom review criteria. Examples include:

Financial applications should emphasize:

- Decimal precision in monetary calculations

- Audit trail completeness

- Transaction atomicity

- Compliance with financial regulations

Healthcare systems require focus on:

- HIPAA compliance in data handling

- Patient data anonymization

- Encryption of sensitive information

- Access control granularity

IoT and embedded systems need attention to:

- Memory management efficiency

- Power consumption patterns

- Real-time constraint adherence

- Hardware interaction safety

These domain-specific emphases get defined in REVIEW.md files, allowing the AI to prioritize relevant issues while deprioritizing less critical concerns for the specific context.
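The "decimal precision in monetary calculations" item is a good example of what a financial REVIEW.md emphasis asks the reviewer to flag. The code below is ordinary Python using the standard `decimal` module, not output from Claude; it shows why binary floats are unsafe for money while `Decimal` keeps exact cents.

```python
from decimal import Decimal, ROUND_HALF_UP


def total_float(prices: list[str]) -> float:
    total = 0.0
    for p in prices:
        total += float(p)  # binary floating point: most cent values are inexact
    return total


def total_decimal(prices: list[str]) -> Decimal:
    # Decimal keeps exact base-10 values; quantize fixes the result to cents.
    total = sum((Decimal(p) for p in prices), Decimal("0"))
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


prices = ["0.10", "0.20"]
print(total_float(prices))    # 0.30000000000000004
print(total_decimal(prices))  # 0.30
```

Passing prices as strings matters: `Decimal("0.10")` is exact, whereas `Decimal(0.10)` would inherit the float's binary rounding error.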

Multi-Repository Consistency

Organizations managing multiple repositories benefit from shared configuration strategies:

- Template repositories containing standard CLAUDE.md and REVIEW.md files

- Configuration inheritance where project configs extend organizational defaults

- Centralized policy management through shared configuration repositories

- Cross-project learning leveraging insights from one codebase to improve others

This consistency is especially valuable for agencies building multiple client projects, ensuring uniform quality standards across diverse engagements. Much as AI tools help Bubble developers maintain consistency, Claude code review creates standardized quality baselines.
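Configuration inheritance can be as simple as merging an organization-wide REVIEW.md with a project-specific one, section by section. The scheme below is a hypothetical convention, not a documented Claude Code feature: files are split on level-2 `## ` headings, a project section replaces the organization section with the same title, and all other organization sections are inherited. Text before the first heading is ignored.

```python
import re


def parse_sections(markdown: str) -> dict[str, str]:
    """Split Markdown into {heading: body} using level-2 '## ' headings."""
    sections: dict[str, str] = {}
    current = None
    for line in markdown.splitlines():
        match = re.match(r"^## (.+)$", line)
        if match:
            current = match.group(1).strip()
            sections[current] = ""
        elif current is not None:
            sections[current] += line + "\n"
    return {title: body.strip() for title, body in sections.items()}


def merge_review_config(org_default: str, project_override: str) -> str:
    """Project sections override same-named org sections; the rest are inherited."""
    merged = parse_sections(org_default)
    merged.update(parse_sections(project_override))
    return "\n\n".join(f"## {title}\n{body}" for title, body in merged.items())


org = "## Security\nNo secrets in logs.\n\n## Style\nUse snake_case."
project = "## Style\nFollow Django conventions.\n\n## Finance\nUse Decimal for money."
print(merge_review_config(org, project))
```

Keeping the organization's section order and appending new project sections at the end makes diffs between client repositories easy to read.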

Comparative Analysis with Alternative Tools

Understanding how Claude code review compares to other code quality tools helps teams make informed technology choices.

Traditional Static Analysis Tools

Classical linters and static analyzers like ESLint, SonarQube, and Pylint operate through predefined rules:

| Feature | Claude Code Review | Traditional Static Analysis |
|---|---|---|
| Context understanding | Deep semantic analysis | Pattern matching |
| False positive rate | 10-15% | 25-40% |
| Custom rule creation | Natural language config | Complex rule syntax |
| Learning capability | Improves with feedback | Fixed rule sets |
| Setup complexity | Minimal configuration | Extensive rule tuning |
| Language support | Multi-language | Language-specific tools needed |

The key advantage of Claude code review lies in contextual understanding: where traditional tools match patterns, AI systems reason about intent and data flow.

Other AI Code Review Solutions

Several AI-powered alternatives exist, each with distinct characteristics. The empirical study of AI coding tools examines common failure patterns across different systems, providing insights into relative strengths.

Claude's multi-agent architecture distinguishes it from single-model approaches by providing specialized analysis without requiring multiple tool integrations. Teams gain comprehensive coverage through one system rather than combining multiple specialized tools.

Future Developments and Roadmap

The Claude code review landscape continues to evolve rapidly as AI capabilities advance and development practices change.

Emerging Capabilities

Upcoming enhancements expected in 2026 include:

- Cross-file dependency analysis tracking issues spanning multiple modules

- Performance prediction modeling estimating runtime characteristics from code structure

- Automated fix generation suggesting specific code changes to address issues

- Trend analysis identifying quality metrics evolution over time

- Integration with testing frameworks correlating review findings with test coverage

These capabilities move beyond simple issue detection toward proactive code quality management and automated improvement suggestions.

Industry Adoption Trends

Claude code review adoption is accelerating across several sectors:

Startups embrace it for rapid quality scaling without proportional team growth. The ability to maintain quality while moving fast aligns perfectly with startup needs.

Enterprises integrate it into compliance workflows, particularly in regulated industries where code quality documentation matters for audits.

Agencies leverage it to standardize quality across client projects, ensuring consistent deliverables regardless of which developers work on specific engagements.

The plugin ecosystem continues expanding, adding specialized capabilities for framework-specific analysis and integration with project management tools.

Measuring Success and Continuous Improvement

Effective Claude code review implementation requires ongoing measurement and refinement based on objective metrics and team feedback.

Key Performance Indicators

Track these metrics to evaluate Claude code review effectiveness:

- Bug escape rate - Production bugs that should have been caught in review

- Review acceptance rate - Percentage of suggestions developers implement

- False positive rate - Issues flagged incorrectly

- Review time reduction - Decrease in human review duration

- Code quality trends - Long-term improvements in codebase health

Quarterly benchmark targets:

| Metric | Months 1-3 | Months 4-6 | Months 7-9 | Months 10-12 |
|---|---|---|---|---|
| Bug escape rate | Baseline | -15% | -30% | -45% |
| Acceptance rate | 70% | 80% | 85% | 90% |
| False positive rate | 25% | 20% | 15% | <10% |
| Review time saved | 20% | 30% | 40% | 50% |

These benchmarks assume continuous configuration refinement and team adaptation. Initial periods show lower performance as teams calibrate settings and learn to work with AI feedback.
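Checking a quarter against the benchmark table above is straightforward once the raw counts are collected. In this sketch the inputs (escaped bugs, flagged findings, accepted suggestions, false positives) are hypothetical counts you would pull from your issue tracker and review logs; targets are treated as bounds (at least for acceptance, at most for the other two), and review time saved is left out because it needs separate time tracking.

```python
# Targets from the quarterly benchmark table above, as fractions.
TARGETS = {
    1: {"escape_change": 0.0, "acceptance": 0.70, "false_positive": 0.25},
    2: {"escape_change": -0.15, "acceptance": 0.80, "false_positive": 0.20},
    3: {"escape_change": -0.30, "acceptance": 0.85, "false_positive": 0.15},
    4: {"escape_change": -0.45, "acceptance": 0.90, "false_positive": 0.10},
}


def quarter_kpis(baseline_escapes: int, escapes: int, flagged: int,
                 accepted: int, false_positives: int) -> dict[str, float]:
    return {
        # Negative values mean fewer escaped bugs than the baseline quarter.
        "escape_change": (escapes - baseline_escapes) / baseline_escapes,
        "acceptance": accepted / flagged,
        "false_positive": false_positives / flagged,
    }


def missed_targets(quarter: int, kpis: dict[str, float]) -> list[str]:
    t = TARGETS[quarter]
    missed = []
    if kpis["escape_change"] > t["escape_change"]:
        missed.append("bug escape rate")
    if kpis["acceptance"] < t["acceptance"]:
        missed.append("acceptance rate")
    if kpis["false_positive"] > t["false_positive"]:
        missed.append("false positive rate")
    return missed


kpis = quarter_kpis(baseline_escapes=20, escapes=16, flagged=200,
                    accepted=160, false_positives=30)
print(missed_targets(2, kpis))  # []
print(missed_targets(3, kpis))  # ['bug escape rate', 'acceptance rate']
```

The same numbers meet the months 4-6 targets but miss two of the months 7-9 targets, which is the signal to revisit configuration before the next quarter.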

Feedback Loop Establishment

Systematic improvement requires structured feedback collection:

- Weekly developer surveys capturing satisfaction and pain points

- Monthly review retrospectives discussing false positives and missed issues

- Quarterly configuration audits evaluating REVIEW.md effectiveness

- Annual ROI assessments measuring financial impact and strategic value

This feedback directly informs configuration updates, ensuring Claude code review evolves with project needs rather than going stale. Teams that use no-code platforms alongside traditional development, similar to the approaches described for AI software development, benefit in particular from adaptive review criteria that accommodate hybrid architectures.

Claude code review represents a significant advancement in automated code quality assurance, combining multi-agent AI architecture with practical development workflow integration to catch bugs, security vulnerabilities, and quality issues before they reach production. For teams building at the intersection of traditional development and modern no-code platforms, these capabilities become even more valuable as project complexity increases. Big House Technologies leverages AI-powered development tools including advanced code review systems to deliver high-quality, scalable solutions for enterprises and startups, combining the speed of no-code platforms with the robustness of AI-assisted quality assurance to transform ideas into production-ready products efficiently.

About Big House

Big House is committed to 1) developing robust internal tools for enterprises, and 2) crafting minimum viable products (MVPs) that help startups and entrepreneurs bring their visions to life.

If you'd like to explore how we can build technology for you, get in touch. We'd be excited to discuss what you have in mind.
