The computing landscape is experiencing a fundamental shift as artificial intelligence becomes deeply embedded into the core architecture of operating systems. What began as standalone AI features bolted onto existing platforms has evolved into something far more transformative: the artificial intelligence operating system. This new breed of software fundamentally rethinks how machines interact with users, process information, and make decisions. For businesses evaluating AI software development strategies in 2026, understanding these AI-native platforms is essential for maintaining competitive advantage.

Understanding the Artificial Intelligence Operating System

An artificial intelligence operating system represents a paradigm shift from traditional computing architectures. Rather than treating AI as an application layer or optional feature, these systems build machine learning, natural language processing, and predictive analytics into the operating system itself, exposing them as core platform services rather than add-ons.

The concept extends beyond simply adding voice assistants or automated recommendations. Microsoft's commitment to making Windows 11 AI-native illustrates how major technology companies are rebuilding their platforms from the ground up with AI at the center. This transformation affects everything from memory management to file systems, application prioritization to security protocols.

Key Characteristics of AI-Native Operating Systems

Modern artificial intelligence operating systems share several defining characteristics that distinguish them from conventional platforms:

- Adaptive resource allocation that learns usage patterns and optimizes performance automatically

- Predictive task management anticipating user needs before explicit commands

- Context-aware interfaces that adjust based on user behavior, time of day, and workflow patterns

- Integrated machine learning enabling applications to share insights and improve collectively

- Natural language interaction as a primary input method alongside traditional controls

These capabilities fundamentally change how businesses approach software development. Organizations implementing AI-powered no-code development tools benefit significantly when building on AI-native platforms, as the operating system itself provides intelligence infrastructure that applications can leverage.

The Business Case for AI Operating Systems

Organizations evaluating whether to adopt an artificial intelligence operating system face important strategic considerations. The decision impacts not just IT infrastructure but core business operations and competitive positioning.

Operational Efficiency Gains

AI-native operating systems aim to deliver measurable efficiency improvements across multiple dimensions. Resource utilization improves through intelligent scheduling that predicts workload patterns. Security improves through behavioral analysis that can identify anomalies before they become breaches. Employee productivity increases when routine tasks are automated based on learned preferences.

Consider a development team building applications on an AI operating system. The platform anticipates resource needs during peak compilation times, pre-allocates processing capacity, and optimizes development environment configurations automatically. This reduces wait times and accelerates the development cycle without manual intervention.
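One way to picture this kind of predictive pre-allocation is a simple moving-average forecast. The sketch below is illustrative only: `LoadForecaster`, its `alpha` and `headroom` values, and the sample numbers are assumptions made for this example, not part of any vendor's scheduler.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LoadForecaster:
    """Exponentially weighted moving average of observed CPU demand, in cores."""
    alpha: float = 0.5      # weight given to the newest observation (assumed value)
    headroom: float = 1.2   # safety margin on top of the forecast (assumed value)
    estimate: Optional[float] = None

    def observe(self, cores_used: float) -> None:
        # The first sample seeds the estimate; later samples are blended in.
        if self.estimate is None:
            self.estimate = cores_used
        else:
            self.estimate = self.alpha * cores_used + (1 - self.alpha) * self.estimate

    def cores_to_reserve(self) -> float:
        # Reserve forecast plus headroom; reserve nothing before any data arrives.
        return 0.0 if self.estimate is None else self.estimate * self.headroom


forecaster = LoadForecaster()
for sample in [2.0, 4.0, 8.0]:  # hypothetical demand during successive builds
    forecaster.observe(sample)
reserved = forecaster.cores_to_reserve()
```

With the three samples shown, the forecast settles at 5.5 cores, and roughly 6.6 cores are reserved once the 20% headroom is applied. A production scheduler would likely use richer signals such as time of day or queue depth.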

| Efficiency Area | Traditional OS | AI Operating System | Improvement |
|---|---|---|---|
| Resource allocation | Static/Manual | Predictive/Automatic | 35-45% |
| Security response time | Reactive | Proactive | 60-70% |
| Task automation | Rule-based | Learning-based | 50-60% |
| User productivity | Standard | Personalized | 25-35% |

Integration with No-Code Platforms

The synergy between artificial intelligence operating systems and no-code development platforms creates powerful opportunities for rapid application deployment. Platforms like Bubble and Lovable already incorporate significant AI capabilities, but when running on an AI-native OS, these tools gain enhanced performance and additional intelligence layers.

MVP software development accelerates dramatically when the underlying operating system provides built-in AI services. Features like image recognition, natural language understanding, and predictive analytics become operating system utilities rather than third-party integrations requiring complex configuration.

Major Players and Platform Options

The artificial intelligence operating system market is rapidly evolving, with established technology giants and innovative startups competing to define the future of computing.

Enterprise Solutions

Microsoft leads enterprise adoption with its AI-native Windows evolution. The platform integrates Copilot capabilities across all system functions, providing businesses with familiar interfaces enhanced by intelligent automation. According to PC Gamer's analysis, the company faces branding challenges as it navigates the complex landscape of AI-integrated computing.

Google is developing multiple AI operating system initiatives. Tom's Guide reports on Aluminium OS, an Android-based platform designed with AI at its core. This system aims to unify Google's operating system strategy while providing seamless AI integration across devices.

Apple Intelligence represents Apple's approach to AI operating systems. The comprehensive suite of AI tools integrates deeply into iOS, iPadOS, and macOS, providing privacy-focused intelligence that learns user patterns while maintaining data security.

Emerging Platforms

Newer entrants are taking bold approaches to reimagining what an artificial intelligence operating system can be. Nothing's AI-native OS plans emphasize personalized experiences that adapt dynamically to individual users across multiple device types.

Experimental projects push boundaries further. VibeOS represents an operating system built entirely by generative AI, demonstrating both the potential and challenges of AI-generated software at the operating system level.

Implementation Strategies for Businesses

Successfully adopting an artificial intelligence operating system requires careful planning and strategic execution. Organizations cannot simply swap operating systems without considering broader implications for their technology stack and business processes.

Assessment and Planning

Begin with a thorough assessment of current infrastructure and business requirements. Evaluate which workloads benefit most from AI-native capabilities and which applications require traditional computing paradigms.

Critical assessment areas include:

- Application compatibility - Identify software requiring updates or replacements

- Data infrastructure - Ensure systems can support AI processing requirements

- Skills inventory - Determine training needs for IT staff and end users

- Security compliance - Verify AI operating systems meet regulatory requirements

- Integration points - Map connections with existing business systems

Organizations investing in AI product development tools for startups should prioritize platforms that align with their chosen artificial intelligence operating system to maximize compatibility and performance.

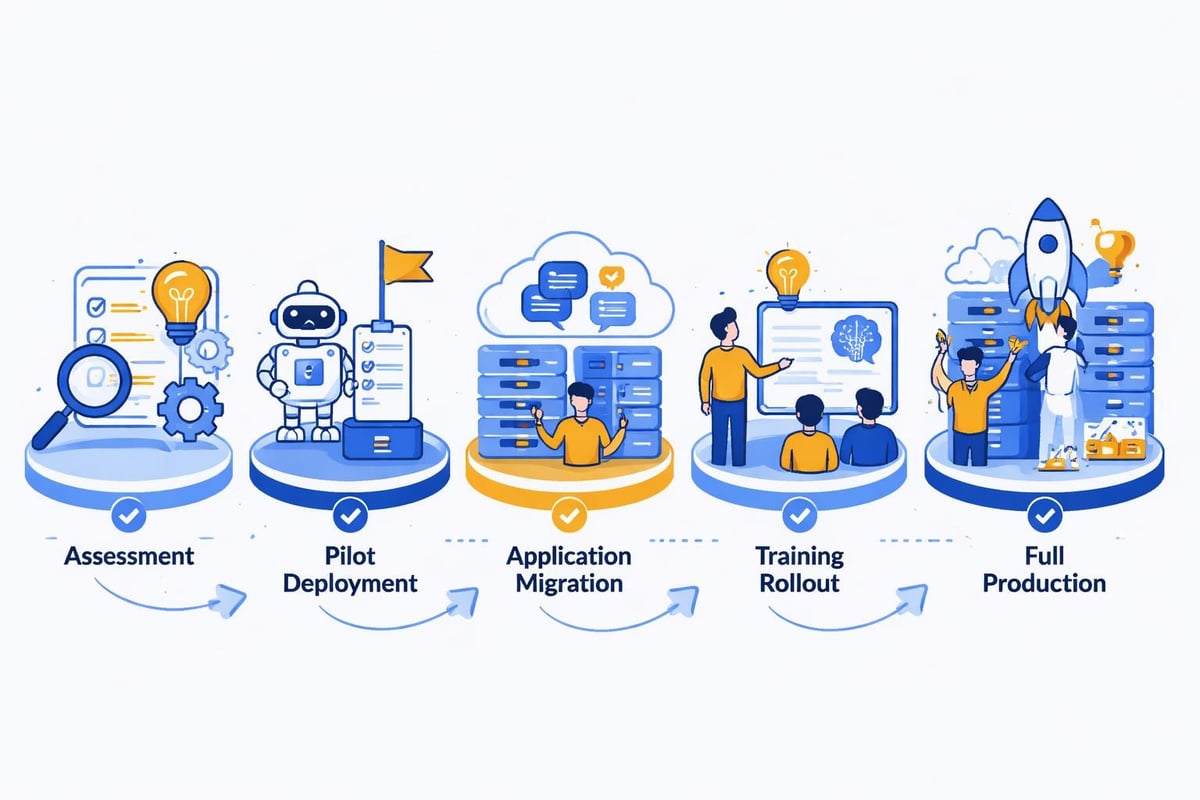

Pilot Programs and Rollout

Deploy pilot programs targeting specific departments or use cases before organization-wide implementation. Development teams make excellent pilot candidates because they can provide detailed technical feedback and adapt quickly to new workflows.

A staged rollout approach minimizes disruption while building organizational confidence. Start with non-critical systems, gather performance data, refine configurations based on real-world usage, then expand to mission-critical applications.
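A staged rollout is often implemented by assigning each device to a stable bucket and widening the admitted percentage over time. The following is a minimal sketch under that assumption; `in_rollout` and the device ID format are hypothetical names chosen for illustration.

```python
import hashlib


def in_rollout(device_id: str, percent: int) -> bool:
    """Deterministically place a device in the first `percent` of 100 buckets."""
    digest = hashlib.sha256(device_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


# Hypothetical pilot: check which of a few devices fall inside a 10% stage.
pilot = [d for d in ("device-1", "device-2", "device-3") if in_rollout(d, 10)]
```

Because the bucket depends only on the device ID, a device admitted at the 10% stage remains admitted when the rollout expands to 50%, which keeps performance data comparable between stages.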

Development Considerations for AI-Native Platforms

Building applications for an artificial intelligence operating system requires developers to think differently about architecture, user experience, and performance optimization.

Leveraging Native AI Capabilities

Modern artificial intelligence operating systems expose APIs and services that applications can consume without implementing AI functionality from scratch. Natural language processing, computer vision, predictive analytics, and recommendation engines become platform services rather than application responsibilities.

This architectural shift parallels the no-code revolution transforming software development. Just as no-code platforms abstract complex coding tasks, AI operating systems abstract complex intelligence tasks, letting developers focus on business logic rather than machine learning implementation.

Performance Optimization

Applications running on AI operating systems benefit from intelligent resource management, but developers must design with AI capabilities in mind. Traditional performance optimization focuses on minimizing resource consumption. AI-native development balances resource usage with the value gained from AI processing.

Optimization strategies include:

- Designing interfaces that adapt based on AI-driven user insights

- Implementing progressive intelligence that enhances features as the system learns

- Building feedback loops that improve AI models through application usage

- Structuring data to maximize AI processing efficiency

- Creating fallback behaviors when AI services become unavailable
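The last strategy in the list, fallback behavior, can be sketched in a few lines. This example assumes a hypothetical platform summarization service passed in as `ai_summarize`; no real operating system API is implied, and the truncation fallback is just one possible degraded mode.

```python
from typing import Callable


def summarize_with_fallback(
    text: str,
    ai_summarize: Callable[[str], str],
    max_chars: int = 80,
) -> str:
    """Try the platform AI service; fall back to plain truncation if it fails."""
    try:
        return ai_summarize(text)
    except (ConnectionError, TimeoutError):
        # Degraded but predictable behavior: keep the first max_chars characters.
        return text[:max_chars].rstrip()


def unavailable_service(text: str) -> str:
    # Stand-in for a hypothetical OS-provided summarizer that is currently down.
    raise ConnectionError("AI service unavailable")


result = summarize_with_fallback(
    "Quarterly report: revenue grew in all regions.",
    unavailable_service,
    max_chars=16,
)
```

Here the service raises an error, so `result` is the truncated text `"Quarterly report"`. The application keeps working, only with less intelligence, which is the point of designing the fallback up front.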

User Experience Design

The artificial intelligence operating system changes fundamental assumptions about user interface design. Static layouts give way to adaptive interfaces that reconfigure based on context, usage patterns, and predicted user intent.

Design teams must embrace uncertainty and variation rather than pixel-perfect consistency. Interfaces become conversations rather than forms, with the operating system mediating interactions based on learned user preferences.

Security and Privacy Implications

The artificial intelligence operating system introduces new security paradigms alongside new vulnerabilities. Understanding both is essential for enterprise deployment.

Enhanced Security Capabilities

AI-native platforms provide sophisticated threat detection through behavioral analysis. Rather than only matching known attack signatures, these systems identify anomalous patterns that may indicate a security breach. This proactive approach can catch zero-day exploits and insider threats that signature-based security tools tend to miss.
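To make behavior-based detection concrete, here is a deliberately simple sketch that flags values far from a learned baseline using a z-score. It is an assumption-laden illustration, not how any particular platform works: `is_anomalous`, the threshold of 3, and the login counts are all hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(history: Sequence[float], value: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the baseline mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


logins_per_hour = [4, 5, 6, 5, 4, 6, 5]  # hypothetical baseline for one account
burst_flagged = is_anomalous(logins_per_hour, 40)
normal_flagged = is_anomalous(logins_per_hour, 6)
```

A burst of 40 logins is flagged while 6 logins is not. Real systems combine many signals and more robust models, but the principle of comparing current behavior with a learned baseline is the same.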

Privacy protection also benefits from AI capabilities. Federated learning techniques enable operating systems to personalize experiences while keeping sensitive data local rather than transmitting it to cloud services. Apple Intelligence illustrates a related on-device approach, processing many AI tasks locally to maintain privacy.

New Vulnerability Surfaces

Conversely, artificial intelligence operating systems introduce attack vectors that don't exist in traditional platforms. Adversarial attacks can manipulate AI decision-making with carefully crafted inputs. Model poisoning corrupts the training data or updates that shape AI behavior. Extraction attacks steal proprietary AI models embedded in the operating system.

| Security Concern | Traditional OS Risk | AI OS Risk | Mitigation Strategy |
|---|---|---|---|
| Malware detection | Signature-based | Behavior-based | Multi-layer validation |
| Data privacy | Access controls | Inference attacks | Differential privacy |
| System manipulation | Code injection | Model poisoning | Continuous monitoring |
| API exploitation | Standard exploits | AI service abuse | Rate limiting + anomaly detection |
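As an illustration of the rate-limiting mitigation in the last row, the sketch below uses a token bucket, a common general-purpose technique. The `TokenBucket` class and its parameters are hypothetical and not taken from any AI operating system; time is passed in explicitly so the behavior is easy to follow.

```python
class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity: float, rate: float) -> None:
        self.capacity = capacity
        self.rate = rate
        self.tokens = capacity
        self.last = 0.0

    def allow(self, now: float) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


bucket = TokenBucket(capacity=2, rate=1.0)
# Four calls to a hypothetical AI service at these timestamps (seconds).
decisions = [bucket.allow(t) for t in (0.0, 0.1, 0.2, 1.5)]
```

The first two calls use the burst allowance, the third is rejected, and the fourth succeeds after the bucket has refilled. Rejected calls are a natural input for the anomaly detection mentioned in the same row.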

Organizations must implement comprehensive security frameworks that address both traditional and AI-specific threats when deploying these platforms.

Cost Considerations and ROI

Evaluating the financial impact of adopting an artificial intelligence operating system requires looking beyond simple licensing costs to total cost of ownership and business value generation.

Direct and Indirect Costs

Direct costs include:

- Operating system licenses and subscription fees

- Hardware upgrades to support AI processing requirements

- Training programs for IT staff and end users

- Consulting services for migration and optimization

- Compatibility testing and application modifications

Indirect costs encompass:

- Productivity losses during transition periods

- Potential security incidents during learning phases

- Opportunity costs of delaying other IT initiatives

- Risk mitigation for failed deployments

Value Generation and Savings

Organizations implementing artificial intelligence operating systems typically realize value through multiple channels. Automation of routine tasks reduces labor costs while improving consistency. Predictive maintenance prevents expensive system failures. Enhanced decision support improves business outcomes across operations.

Development efficiency gains can prove particularly significant. Teams building on AI-native platforms can complete projects faster with fewer resources. When combined with no-code development approaches, organizations can reach a development velocity that is difficult to achieve with traditional methods.

Future Trends and Predictions

The artificial intelligence operating system market continues evolving rapidly. Several trends will shape platform development and business adoption through 2026 and beyond.

Increasing Specialization

General-purpose AI operating systems will coexist with specialized platforms optimized for specific industries or use cases. Healthcare organizations may adopt operating systems trained on medical workflows and compliance requirements. Manufacturing facilities might implement platforms specialized for industrial control systems and supply chain optimization.

This specialization mirrors trends in application software, where vertical solutions increasingly outperform horizontal platforms for industry-specific needs.

Edge Computing Integration

TechDecent's exploration of AI operating system architecture points to an accelerating shift toward distributed intelligence. Future artificial intelligence operating systems will seamlessly span cloud data centers, edge computing nodes, and local devices, distributing AI processing based on latency requirements, bandwidth constraints, and privacy considerations.
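A placement decision based on latency, bandwidth, and privacy might look like the following sketch. Everything here is an assumption for illustration: the `Task` fields, the round-trip times, and the simplification that upload time is the same to edge and cloud.

```python
from dataclasses import dataclass


@dataclass
class Task:
    max_latency_ms: float  # how quickly a response is needed
    payload_mb: float      # data that would have to be uploaded
    sensitive: bool        # whether the data must stay on the device


def choose_tier(
    task: Task,
    uplink_mbps: float,
    edge_rtt_ms: float = 20.0,    # assumed round trip to an edge node
    cloud_rtt_ms: float = 120.0,  # assumed round trip to a cloud region
) -> str:
    """Return 'device', 'edge', or 'cloud' for a task (thresholds are illustrative)."""
    if task.sensitive:
        return "device"
    # Upload time in ms: megabytes * 8 bits / megabits per second * 1000.
    upload_ms = task.payload_mb * 8 / uplink_mbps * 1000
    if cloud_rtt_ms + upload_ms <= task.max_latency_ms:
        return "cloud"
    if edge_rtt_ms + upload_ms <= task.max_latency_ms:
        return "edge"
    return "device"


tier = choose_tier(Task(max_latency_ms=150, payload_mb=1.0, sensitive=False), uplink_mbps=100)
```

In this example a 1 MB payload takes 80 ms to upload, so the cloud path (200 ms) misses the 150 ms budget while the edge path (100 ms) fits, and `tier` is `"edge"`.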

Autonomous Operations

Operating systems will transition from assisting users to operating autonomously in many scenarios. System maintenance, security patching, resource optimization, and even application deployment will occur without human intervention, with AI making decisions based on learned organizational patterns and defined constraints.

Standardization and Interoperability

As the concept of AI operating systems matures, industry standards will emerge governing how these platforms expose AI capabilities, share models, and interoperate. This standardization will reduce vendor lock-in and enable organizations to mix components from multiple providers.

Selecting the Right Platform for Your Organization

Choosing an artificial intelligence operating system represents a strategic decision with long-term implications. Organizations should evaluate options against multiple criteria aligned with business objectives.

Evaluation Framework

Technical compatibility tops most evaluation lists. Assess how well each platform integrates with existing infrastructure, supports required applications, and aligns with architectural standards. Organizations standardized on Microsoft ecosystems face different considerations than those heavily invested in Google or Apple platforms.

AI capability alignment determines whether platform intelligence matches business needs. Some operating systems excel at natural language processing while others prioritize computer vision or predictive analytics. Match capabilities to use cases driving adoption.

Vendor ecosystem influences long-term success. Platforms with rich developer communities, extensive partner networks, and comprehensive support resources reduce implementation risk. Organizations building scalable software solutions benefit from ecosystems that support both traditional development and emerging no-code approaches.

Total cost of ownership extends beyond licensing to include hardware, training, migration, and ongoing operational expenses. Calculate realistic ROI timelines accounting for both hard costs and soft benefits.
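The arithmetic behind a rough ROI timeline can be sketched as follows. The functions and all figures are hypothetical placeholders; substitute your own estimates for licensing, hardware, training, and expected benefits.

```python
from typing import Optional


def total_cost_of_ownership(upfront_cost: float, monthly_cost: float, months: int) -> float:
    """Upfront spend plus recurring operational cost over the evaluation period."""
    return upfront_cost + monthly_cost * months


def payback_months(
    upfront_cost: float, monthly_cost: float, monthly_benefit: float
) -> Optional[float]:
    """Months until cumulative net benefit covers the upfront cost, or None if never."""
    net = monthly_benefit - monthly_cost
    if net <= 0:
        return None
    return upfront_cost / net


# Hypothetical figures: 120,000 upfront, 5,000 per month to run, 15,000 per month in benefits.
tco_3_years = total_cost_of_ownership(120_000, 5_000, months=36)
payback = payback_months(120_000, 5_000, 15_000)
```

With these placeholder numbers, the three-year total cost of ownership is 300,000 and the investment pays back in 12 months. Soft benefits are harder to quantify, so it is worth running the calculation with both conservative and optimistic benefit estimates.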

Building Internal Capabilities

Successful artificial intelligence operating system adoption requires developing organizational capabilities beyond IT infrastructure. Teams need training in AI concepts, data management practices, and new development methodologies.

Creating centers of excellence focused on AI-native development helps concentrate expertise and accelerate learning. These teams can pilot implementations, develop best practices, and train the broader organization in leveraging platform capabilities.

Partnership with specialized agencies provides another capability-building path. Organizations can accelerate time-to-value by working with experts who understand both AI operating systems and specific business domains.

Integration with Modern Development Practices

The artificial intelligence operating system fundamentally changes software development workflows, particularly when combined with no-code and low-code platforms that already emphasize rapid delivery and business user empowerment.

DevOps and Continuous Delivery

AI-native platforms enhance DevOps practices through intelligent automation of build, test, and deployment processes. Continuous integration pipelines become smarter, automatically optimizing test coverage based on code changes, predicting integration issues before they occur, and selecting deployment strategies based on historical performance data.
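The simplest form of change-based test selection uses a static map from tests to the files they exercise. The sketch below shows that baseline; `select_tests` and the coverage map are hypothetical, and a learning-based pipeline would replace the fixed map with predictions from historical results.

```python
from typing import Dict, List, Set


def select_tests(changed_files: Set[str], coverage_map: Dict[str, Set[str]]) -> List[str]:
    """Return the tests whose covered files overlap the changed files, sorted by name."""
    return sorted(test for test, files in coverage_map.items() if files & changed_files)


# Hypothetical mapping from test name to the source files it exercises.
coverage_map = {
    "test_billing": {"billing.py", "tax.py"},
    "test_checkout": {"cart.py", "billing.py"},
    "test_search": {"search.py"},
}
selected = select_tests({"billing.py"}, coverage_map)
```

A change to `billing.py` selects `test_billing` and `test_checkout` and skips `test_search`, which shortens the feedback loop without dropping relevant coverage.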

These capabilities compress development cycles while improving quality. Teams ship features faster with greater confidence, responding to market demands more effectively than competitors using traditional approaches.

No-Code Synergies

The intersection of artificial intelligence operating systems and no-code development creates particularly powerful opportunities. No-code platforms like Bubble already democratize application development by eliminating coding requirements. When these platforms run on AI-native operating systems, they gain additional intelligence that further simplifies development.

Visual development interfaces become more intuitive through AI assistance. The operating system suggests component configurations, identifies potential design issues, and even generates workflow logic based on natural language descriptions. This acceleration proves especially valuable for MVP development where speed to market drives success.

Artificial intelligence operating systems represent the next evolution in computing, fundamentally transforming how businesses build, deploy, and operate software solutions. Organizations that understand these platforms and strategically adopt them position themselves for competitive advantage in an increasingly AI-driven marketplace. Whether you're exploring AI-native development or seeking to modernize legacy systems, Big House Technologies brings deep expertise in both no-code platforms and AI integration to help you navigate this transformation and deliver scalable solutions that leverage the latest operating system capabilities.
